why we built diligence as a co-pilot.

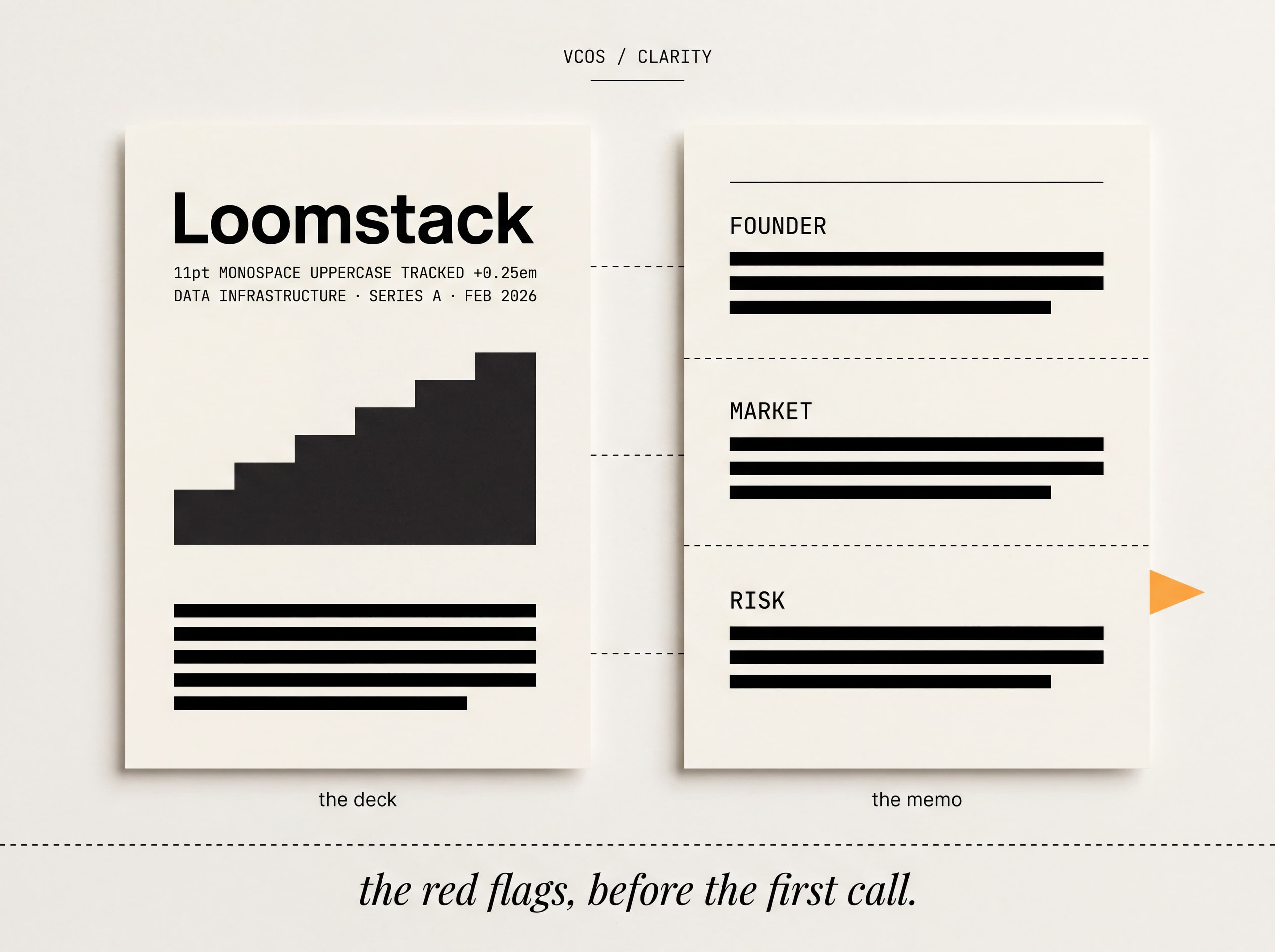

Founder, market, and financial signals from every deck and data room. The red flags surfaced before your first call.

Six weeks into building VCOS, we had a working prototype that could read a pitch deck and a founder transcript and produce a complete diligence memo. Sections, citations, red flags, the works. The model was good. We almost shipped it.

We did not ship it. The reason is the difference between a co-pilot and an autopilot.

automation's mistake.

The autopilot version of diligence is the one most teams build first. The model reads everything, makes a recommendation, and the partner stamps it. The output looks like a memo. The behavior is a confident screen that passes deals straight through to a call. It is faster. It is also wrong, and not in the direction you might expect.

The mistake is not that the model is too pessimistic. The mistake is that the model is too systematic. A partner who trusts an autopilot ends up backing the median of what their thesis predicts. The exceptions lose: the surprising deals, the founders who do not pattern-match. The fund's portfolio drifts toward consensus. Three years later, the fund's IRR matches the median of its vintage, and matching the median is not the business a venture fund is in.

a co-pilot pre-reads.

The co-pilot version of diligence does the structural work without making the call. It reads the deck and the transcript. It pulls the founder, market, and financial signals into a structured memo. It flags the inconsistencies between the founder's narrative and the financials. It surfaces the comparable deals from the fund's own history. It does not say yes. It does not say no.
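To make "it does not say yes, it does not say no" concrete, here is a minimal sketch of a memo schema in Python. The class and function names (`Signal`, `Flag`, `DiligenceMemo`, `signals_by_category`) are hypothetical illustrations, not the VCOS data model. What matters is what the schema leaves out: there is no field for a recommendation.

```python
from dataclasses import dataclass, field

# Hypothetical schema for illustration only; not the actual VCOS data model.

@dataclass
class Signal:
    category: str  # "founder", "market", or "financial"
    claim: str     # what the deck or transcript asserts
    source: str    # citation back to the deck or transcript

@dataclass
class Flag:
    description: str  # the inconsistency the model found
    next_step: str    # a question for the partner, never a verdict

@dataclass
class DiligenceMemo:
    company: str
    signals: list[Signal] = field(default_factory=list)
    flags: list[Flag] = field(default_factory=list)
    comparables: list[str] = field(default_factory=list)  # deals from the fund's own history
    # Deliberately no `recommendation` field: the memo cannot say yes or no.

def signals_by_category(memo: DiligenceMemo, category: str) -> list[Signal]:
    """Return the signals for one section of the memo, in their original order."""
    return [s for s in memo.signals if s.category == category]

# Usage, with content taken from the Loomstack example below.
memo = DiligenceMemo(company="Loomstack")
memo.signals.append(Signal("market", "35% data-team penetration by 2028", "deck"))
memo.flags.append(Flag("No independent benchmark above 18%", "confirm in second call"))
```

One reason to leave the verdict out of the type, rather than hiding it in the interface, is that no downstream view can then surface one by accident.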

The partner reads the memo and the source. The partner asks better questions on the founder call. The partner spots the second-order risks the model could not see. The partner makes the call. Crucially, the partner makes the call in less time than before, because the structural work has already been done.

the partner still decides.

the loomstack memo.

A useful example. A fund we worked with last quarter was looking at a Series A infrastructure company called Loomstack. The autopilot version of diligence would have produced a clean memo. Founder strong. Market large. Financials ahead of plan. Recommendation to invest. The co-pilot version flagged something different. The bottoms-up market sizing assumed a thirty-five percent data-team penetration rate by 2028. The model could not find independent benchmarks above eighteen percent. The flag read, simply: "confirm in second call."

The partner asked the founder, on the second call, where the thirty-five percent came from. The founder did not have a great answer. The partner did not pass on the deal. The partner repriced. The fund led the round at a valuation that worked at eighteen percent penetration, not thirty-five. Two years later, the actual number is twenty-six percent. The deal is fine because the entry was right.
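For a rough sense of what the repricing protected against: a bottoms-up sizing is typically a product of factors, so market size scales linearly with the penetration rate. As an illustrative assumption, write it as $M = N \cdot p \cdot A$, where $p$ is penetration and $N$ (target companies) and $A$ (annual contract value) are our placeholders, not figures from the Loomstack model. Holding $N$ and $A$ fixed:

$$
\frac{M(0.18)}{M(0.35)} = \frac{0.18}{0.35} \approx 0.51, \qquad
\frac{M(0.26)}{M(0.35)} = \frac{0.26}{0.35} \approx 0.74, \qquad
\frac{M(0.26)}{M(0.18)} = \frac{0.26}{0.18} \approx 1.44
$$

Under that assumption, the founder's figure implied a market nearly twice what the benchmarks supported, while the realized twenty-six percent sits about a quarter below the founder's figure and well above the one the entry price assumed.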

A pure autopilot would have backed the thirty-five percent assumption. A pure manual review might have missed the inconsistency entirely, because nobody had time to cross-check the bottoms-up sizing against external benchmarks. The co-pilot did the work nobody else had time to do, and then got out of the way.
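The cross-check behind the Loomstack flag reduces to comparing one assumption in the founder's model against the highest independent benchmark available. A minimal sketch, assuming the benchmarks have already been gathered as fractions; `check_assumption` is a hypothetical name, and the only numbers used below are the thirty-five and eighteen percent from the example above.

```python
from typing import Optional

def check_assumption(name: str, assumed: float, benchmarks: list[float]) -> Optional[str]:
    """Flag an assumption that exceeds every independent benchmark.

    Returns a note for the partner, or None if the assumption is within range.
    It never returns a recommendation to invest or pass.
    """
    if not benchmarks:
        # No external evidence either way: still worth a question, not a verdict.
        return f"{name}: no independent benchmarks found; confirm in second call"
    ceiling = max(benchmarks)
    if assumed > ceiling:
        return (f"{name}: assumes {assumed:.0%}, highest benchmark is {ceiling:.0%}; "
                "confirm in second call")
    return None

note = check_assumption("data-team penetration by 2028", 0.35, [0.18])
print(note)
# data-team penetration by 2028: assumes 35%, highest benchmark is 18%; confirm in second call
```

Returning a note instead of a score keeps the output in the shape of a question for the partner; treating a missing benchmark as a flag, rather than a pass, is a design choice in this sketch.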

where the partner still decides.

Founder judgement. The shape of the round. The size of the position. The follow-on commitment. The ten things that show up in the second meeting that nobody could have anticipated from the deck. All of those remain partner work, and we have been very deliberate about not building features that try to automate them.

Conviction is the unautomatable part of venture. Everything else is overhead. Clarity exists because the overhead was crowding out the conviction. Now the conviction has room.